Introduction

Many organizations use Kafka to manage “streams” of data, which have become prevalent as growing internet usage massively increases the amount of data being generated and needing to be processed. Kafka allows these huge volumes of data to be processed in real time through a combination of “producers”, which write data, and “consumers”, which read it, both working with a Kafka “cluster”: the main data repository.
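To make the producer/consumer model concrete, here is a toy, in-memory sketch of a single Kafka partition as an append-only log. It is a conceptual illustration only, not Kafka's implementation: real brokers persist, replicate and expire records, and the `Partition` and `Consumer` classes are hypothetical names. What it shows is that each consumer tracks its own offset, so several consumers can read the same stream independently.

```python
from dataclasses import dataclass, field


@dataclass
class Partition:
    """Toy model of one Kafka partition: an append-only list of records."""
    records: list = field(default_factory=list)

    def append(self, value: bytes) -> int:
        """Producer side: append a record and return its offset."""
        self.records.append(value)
        return len(self.records) - 1

    def read_from(self, offset: int, max_records: int = 100) -> list:
        """Consumer side: fetch up to max_records starting at an offset."""
        return self.records[offset:offset + max_records]


class Consumer:
    """Each consumer keeps its own position, so many can read one log."""

    def __init__(self, partition: Partition):
        self.partition = partition
        self.position = 0

    def poll(self) -> list:
        batch = self.partition.read_from(self.position)
        self.position += len(batch)
        return batch


log = Partition()
log.append(b"txn-1")
log.append(b"txn-2")
fraud_check = Consumer(log)
reporting = Consumer(log)
print(fraud_check.poll())  # [b'txn-1', b'txn-2']
log.append(b"txn-3")
print(fraud_check.poll())  # [b'txn-3']
print(reporting.poll())    # [b'txn-1', b'txn-2', b'txn-3']
```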

In the NonStop space, it’s important to understand Kafka and how we can integrate our NonStop applications, and their valuable data, with the Kafka ecosystem. Several approaches already allow NonStop data to be streamed to Kafka, including ODBC/JDBC and the Striim product. This article goes into detail on a new product, uLinga for Kafka, that achieves this goal in a highly performant and fault-tolerant manner.

uLinga for Kafka – Overview

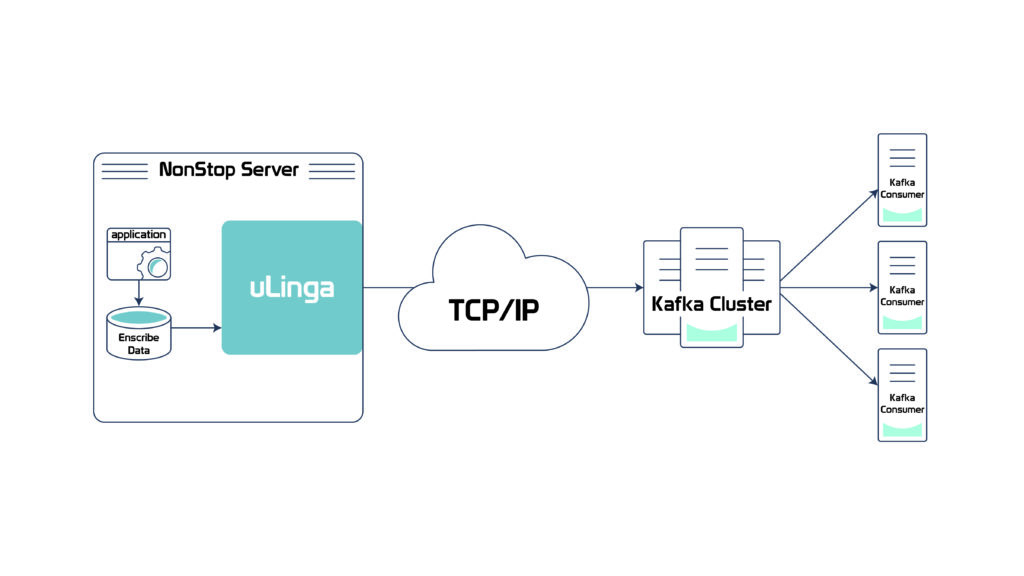

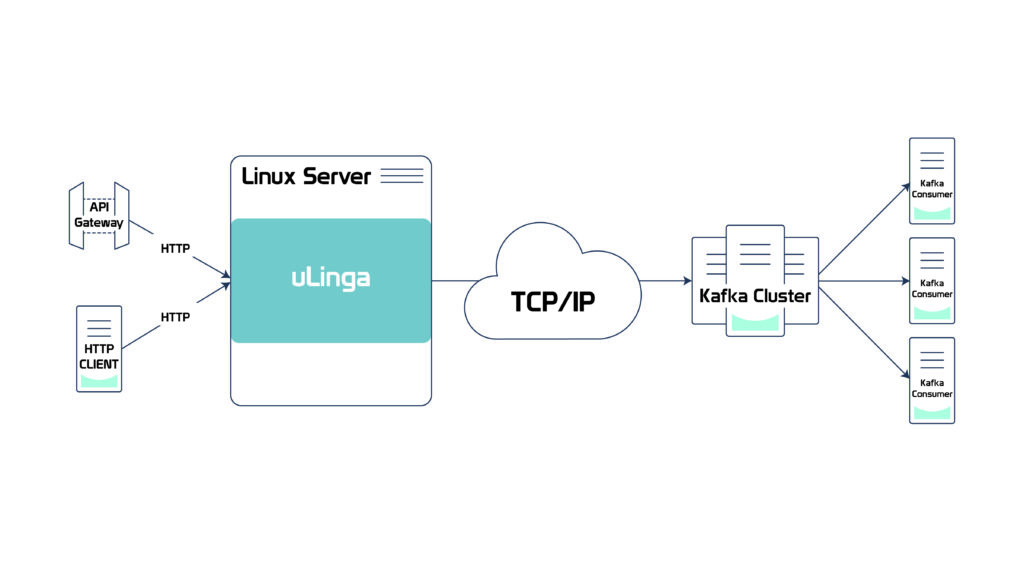

uLinga for Kafka takes a unique approach to Kafka integration: it runs as a natively compiled Guardian process pair and supports the Kafka communications protocols directly over TCP/IP. This removes the need for Java libraries or intermediate databases, providing the best possible performance on NonStop. It also allows uLinga for Kafka to communicate directly with the Kafka cluster, getting streamed data across as quickly and reliably as possible.
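For a feel of what implementing the Kafka protocol directly over TCP/IP involves, the sketch below frames a request as the public Kafka protocol specification describes it: a big-endian INT32 size prefix, then request header v1 (API key, API version, correlation ID, client ID), then the request body. It uses ApiVersions v0 (API key 18, empty body), which clients typically send first to discover what the broker supports. This is an illustrative Python sketch, not uLinga for Kafka's C code; the helper names and the client ID are hypothetical.

```python
import struct

API_VERSIONS = 18  # Kafka API key for the ApiVersions request


def encode_request(api_key: int, api_version: int, correlation_id: int,
                   client_id: str, body: bytes = b"") -> bytes:
    """Frame a Kafka request: INT32 size, request header v1, then body.

    Header v1 = api_key (INT16), api_version (INT16), correlation_id (INT32),
    client_id (NULLABLE_STRING: INT16 length + UTF-8 bytes), all big-endian.
    """
    cid = client_id.encode("utf-8")
    header = struct.pack(">hhih", api_key, api_version, correlation_id, len(cid)) + cid
    payload = header + body
    # The size prefix counts every byte that follows it.
    return struct.pack(">i", len(payload)) + payload


def decode_response_header(frame: bytes) -> tuple:
    """Split a framed response into (correlation_id, remaining bytes)."""
    (size,) = struct.unpack_from(">i", frame, 0)
    (correlation_id,) = struct.unpack_from(">i", frame, 4)
    return correlation_id, frame[8:4 + size]


# The bytes a client would write to a broker socket (e.g. port 9092).
request = encode_request(API_VERSIONS, 0, 1, "nonstop-demo")
```

The correlation ID is echoed back in the response header, which is how a client matching many in-flight requests on one connection pairs each reply with its request.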

Other NonStop Kafka integration solutions require an interim application and/or database, generally running on another platform. This can be less than ideal, as that additional platform may not have the reliability of the NonStop and could introduce a single point of failure. The extra hop can also add latency, delaying the arrival of data in Kafka.

uLinga for Kafka – Application Integration

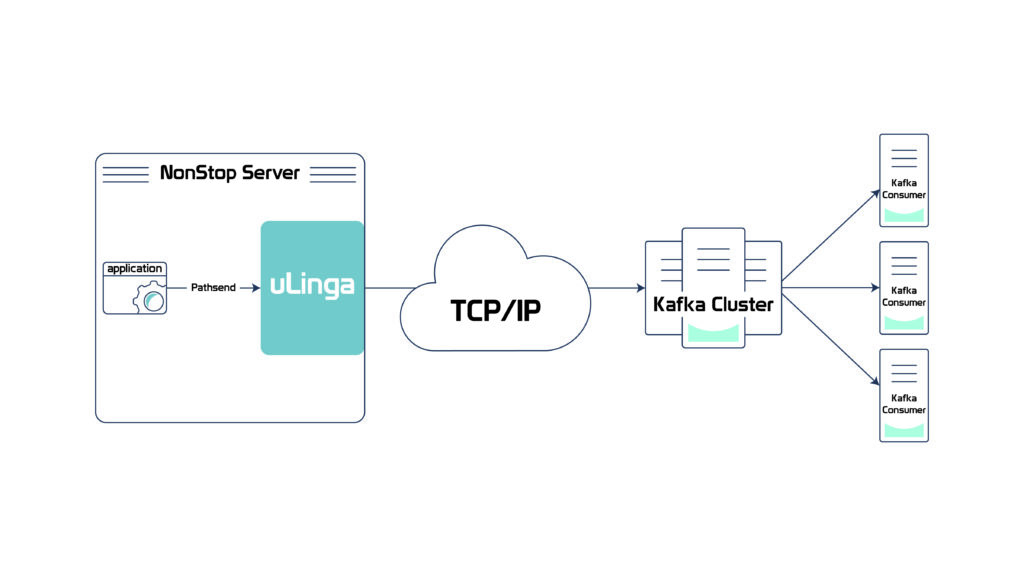

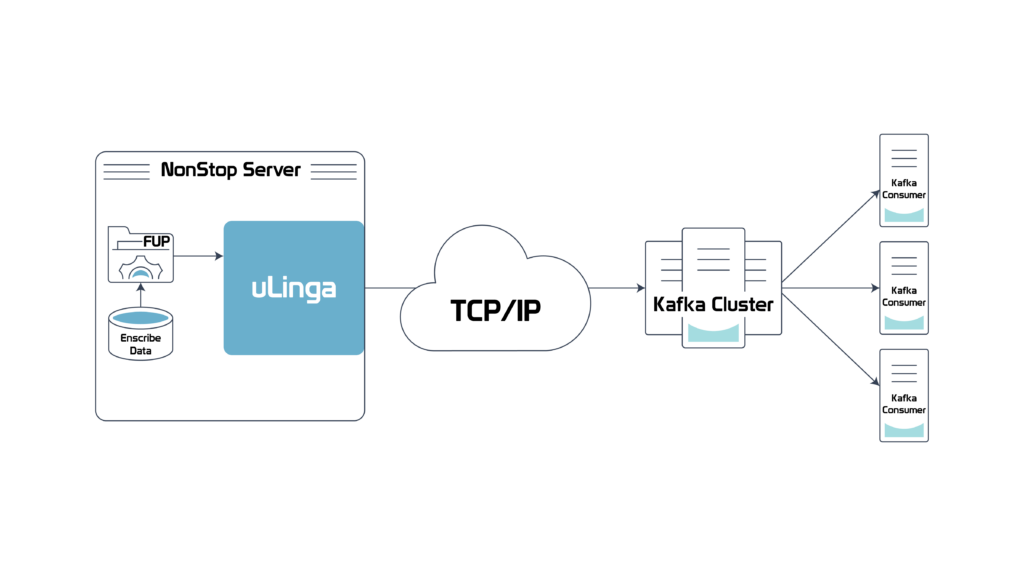

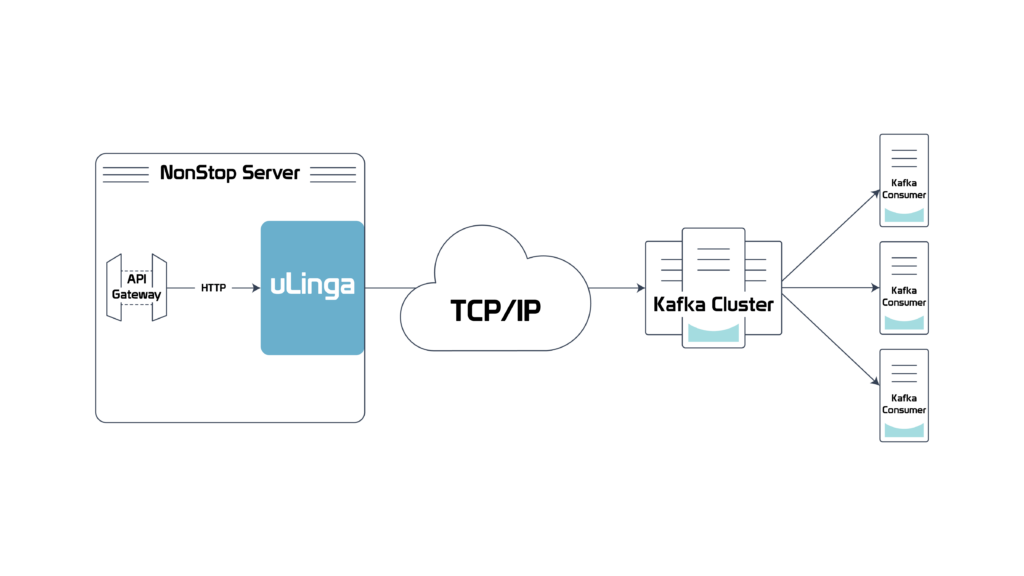

uLinga for Kafka supports the same access points available with other uLinga products to enable applications to stream their data to Kafka. These include Inter-Process Communication (IPC), Pathsend and HTTP/REST. The IPC interface allows NonStop applications to open uLinga for Kafka and send data via Guardian IPC, while the Pathsend interface accepts data as Pathsend messages. The HTTP/REST interface allows applications, such as API gateways, on the NonStop or any other platform to stream data to Kafka over HTTP. uLinga for Kafka also introduces a new access point that allows Enscribe files to be monitored and read in real time as an application writes them.

The following sections explain each of these use cases in more detail.

Entry-Sequenced and Relative Enscribe File Support

In this use case, uLinga for Kafka reads an entry-sequenced or relative Enscribe file, such as a transaction log from a payment application or an access log from an in-house application. uLinga for Kafka performs an “end-of-file chase”: it reads new records from the file as the application writes them and immediately streams them to the Kafka cluster. It also monitors for the creation of new files, automatically picking them up and processing them. uLinga for Kafka can also process files that already exist, and in our in-house testing we’ve seen excellent performance: more than 10,000 TPS on our NonStop X3 server.
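The end-of-file chase can be sketched as a polling loop that remembers how far it has read in each file, emits only complete records, and registers newly created files as it finds them. This Python sketch rests on loud assumptions: records are newline-terminated lines in ordinary files, file names sort in creation order, and a callback stands in for the Kafka send. Real Enscribe entry-sequenced and relative files are record-structured and would be read with Guardian file-system calls; the `EofChaser` class and `TXLOG` file names are hypothetical.

```python
import os
import tempfile


class EofChaser:
    """Minimal end-of-file chase over a directory of append-only log files.

    Illustrative assumptions (not how Enscribe files are read): records are
    newline-terminated lines in ordinary files, and names sort in creation order.
    """

    def __init__(self, directory, prefix, emit):
        self.directory = directory
        self.prefix = prefix
        self.emit = emit      # callback standing in for "send to Kafka"
        self.offsets = {}     # file name -> bytes already processed

    def _discover(self):
        # Register files created since the last pass; files that already
        # existed at start-up are picked up the same way, from offset 0.
        for name in sorted(os.listdir(self.directory)):
            if name.startswith(self.prefix) and name not in self.offsets:
                self.offsets[name] = 0

    def poll(self):
        """Emit every complete record written since the last poll."""
        self._discover()
        sent = 0
        for name in sorted(self.offsets):
            with open(os.path.join(self.directory, name), "rb") as f:
                f.seek(self.offsets[name])
                data = f.read()
            # Stop at the last newline so a half-written record waits
            # for the next poll.
            end = data.rfind(b"\n") + 1
            for record in data[:end].split(b"\n")[:-1]:
                self.emit(name, record)
                sent += 1
            self.offsets[name] += end
        return sent


# Usage: simulate an application writing to hypothetical TXLOG files.
demo_dir = tempfile.mkdtemp()
received = []
chaser = EofChaser(demo_dir, "TXLOG", lambda name, rec: received.append((name, rec)))

with open(os.path.join(demo_dir, "TXLOG001"), "wb") as f:
    f.write(b"rec1\nrec2\npart")       # last record still being written
first = chaser.poll()

with open(os.path.join(demo_dir, "TXLOG001"), "ab") as f:
    f.write(b"ial\n")                  # writer completes the record
with open(os.path.join(demo_dir, "TXLOG002"), "wb") as f:
    f.write(b"rec3\n")                 # a new log file appears
second = chaser.poll()
```

In a long-running process, `poll()` would be called in a loop with a short pause between passes. Holding back a partial trailing record is what stops the chaser from streaming half-written data.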

Pathsend and Guardian IPC Support

Applications can also explicitly send data via the Pathsend and IPC interfaces provided by uLinga for Kafka. This is useful where specific data streams need to be generated and sent directly from the application. A Pathsend client, such as a Pathway requester or Pathway server, simply sends the relevant data to uLinga for Kafka in a Pathsend request, while any NonStop process can send data via Guardian IPC calls. uLinga for Kafka’s native support for the Kafka protocols ensures that this data is streamed with the lowest possible latency.

uLinga for Kafka can also be used to send any Enscribe file to Kafka via utilities such as FUP. A FUP COPY command can specify uLinga for Kafka as the output process, directing all the data to Kafka. The command would look something like:

FUP COPY $INFRA.DATA.DATAFILE, $ULKAF.#KAFKA1

NonStop Application Streaming via HTTP/REST

Another possible deployment is alongside an API gateway, such as the new product recently launched by HPE. Given their central position within the enterprise, API gateways often produce data that needs to be streamed to Kafka. Administrators of an API gateway, which is inherently REST-capable, might choose to use the REST interface into uLinga for Kafka: it’s simple, it’s well understood, and it works “out of the box” with any REST-capable client.

uLinga for Kafka, like all uLinga products, runs on other enterprise platforms, including Linux and Unix, and can provide these same HTTP/REST features to any type of HTTP client on any platform.
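As an illustration of how little a REST-capable client needs, this Python sketch builds an HTTP POST carrying one JSON record using only the standard library. The endpoint layout (`/topics/<topic>`), host name, topic and JSON fields are hypothetical placeholders, not uLinga for Kafka’s documented interface, and the request is built but not sent.

```python
import json
import urllib.request


def build_stream_request(base_url: str, topic: str, record: dict) -> urllib.request.Request:
    """Build (but do not send) an HTTP POST carrying one JSON record.

    Hypothetical: the "<base>/topics/<topic>" path and the body shape are
    placeholders; the product documentation defines the real interface.
    """
    body = json.dumps(record).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/topics/{topic}",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_stream_request("http://ulinga.example:8080", "api-gateway-events",
                           {"path": "/accounts/42", "status": 200})
# To actually send it: urllib.request.urlopen(req, timeout=5)
```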

Performance

As touched on above, because uLinga for Kafka is written in C and directly implements the Kafka wire protocols, it does not rely on any additional software, and we are seeing excellent performance figures in our labs. Straight throughput of over 10,000 TPS is easily achievable. Looking at performance another way, at a constant rate of 100 TPS uLinga for Kafka required approximately 1% of a NonStop X NS3 CPU, with sub-second latency. Please note, as always, that performance can and will vary with a number of environmental factors, and that CPU usage does not scale linearly with transaction volume.
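Expressed per transaction, the 100 TPS measurement works out as follows. This is simple arithmetic on the quoted figures and describes only the average cost at that rate, since CPU usage does not scale linearly with volume.

$$
\frac{\approx 0.01\ \text{CPU-seconds per second}}{100\ \text{transactions per second}} \approx 1 \times 10^{-4}\ \text{CPU-seconds per transaction} = 100\ \mu\text{s of CPU time per transaction}
$$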

uLinga for Kafka – What’s Next?

uLinga for Kafka is now available to beta test partners, so please reach out if you’d like to know more. We are actively adding features, and beta partners have the opportunity to help shape the product’s direction. We’re considering support for the NonStop Event Management Service (EMS), to allow EMS events to be streamed directly to Kafka, and for the Transaction Management Facility (TMF), to allow TMF audit trails to be streamed. There are many other potential features, so please let us know if you’d like to see a different data source or other feature supported.

We also plan to enable uLinga for Kafka to act as a Kafka consumer, making data in Kafka clusters easily accessible to NonStop applications. Once again, let us know if you’d like to know more.

uLinga for Kafka will be presented and demonstrated at TBC this year, so keep an eye on the program, leave a comment below, or contact productinfo@infrasoft.com.au for more information.